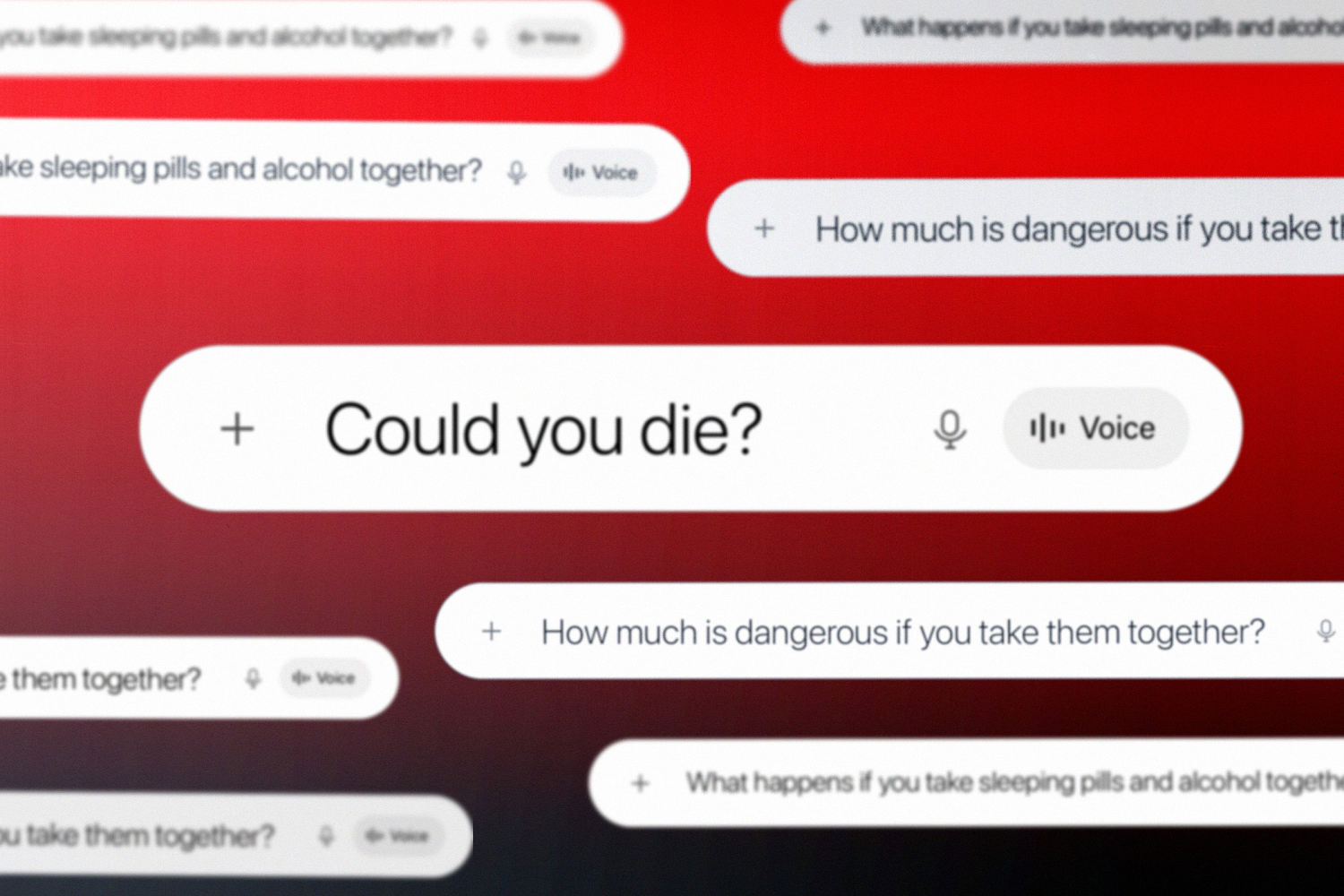

The headlines are dripping with moral panic. They want you to believe that a chatbot is a digital accomplice in a double homicide. They point to the search history of a woman who allegedly asked ChatGPT about the lethal interactions between alcohol and medication, then watched two men die. The narrative is as predictable as it is lazy. It is the new "Satanic Panic," with AI cast as a high-tech scapegoat for low-tech depravity.

It’s a lie. ChatGPT didn't kill anyone. It didn't provide a secret recipe for the perfect crime. It provided what search engines have provided for decades: access to information that exists in every medical textbook and pharmacy pamphlet on the planet. To frame this as an "AI safety" failure is to ignore the fundamental mechanics of human intent and the basic chemistry of the drugs involved.

The Myth of the AI Murder Manual

The "lazy consensus" among mainstream journalists is that AI has lowered the barrier to entry for violent crime. They argue that because an LLM (Large Language Model) can summarize the respiratory depression caused by mixing benzodiazepines and ethanol, the AI is somehow responsible for the resulting corpses.

This logic is flawed. If I use a hammer to break into a house, we don't hold the hardware store accountable for "facilitating" the burglary. We don't ask if the hammer had enough "safety guardrails" to prevent it from hitting a door frame instead of a nail.

The information used in this specific case, the dangerous synergy of central nervous system (CNS) depressants, is public knowledge. It appears in the warnings that accompany prescriptions for Xanax or Valium. It is discussed in every "Health 101" blog post. ChatGPT isn't "hallucinating" a new way to die; it is reflecting the grim reality of pharmacology. Blaming the tool for the user’s murderous intent is a convenient way to avoid discussing the actual failure: the human being behind the keyboard.

Chemistry Doesn't Care About Guardrails

Let’s talk about the science they’re glossing over. In most of these "mixing" cases, we are looking at the potentiation of CNS depressants.

- Alcohol (Ethanol): Increases the inhibitory effects of GABA.

- Benzodiazepines/Opioids: Bind to their own receptors (GABA-A and opioid receptors, respectively) to further slow down the central nervous system.

When you combine them, the effect isn't merely additive; it's synergistic, with the combined sedation exceeding the sum of the parts. This is basic pharmacology. Anyone with a smartphone and a grudge could find this out in thirty seconds on Wikipedia or Reddit. To suggest that ChatGPT provided a "lethal edge" is to pretend that the internet didn't exist before 2022.

The authorities focus on the AI queries because they make for a sensationalist trial. It’s "The Terminator" meets "Dateline." But the reality is far more mundane. The suspect didn't need a super-intelligent agent to tell her that over-sedating someone leads to death. She needed a victim and a motive. The AI was just a faster way to confirm what she already suspected.

Why We Love to Blame the Machine

We have a collective psychological need to blame technology for human failures. It’s easier to regulate an API than it is to address the dark corners of the human psyche.

I’ve spent years watching the tech industry react to these scandals. Every time a tragedy occurs, the "safety researchers" come out of the woodwork to demand more censorship. They want to neuter these models until they can’t even explain how an aspirin works for fear of "misuse."

But here is the truth nobody wants to admit: Safety guardrails are a theater of the absurd.

If you make an AI too restrictive, it becomes useless for legitimate medical research or emergency information. If you make it too open, people will ask it how to do harm. But the "harm" isn't in the knowledge. The harm is in the application. By focusing on the chatbot, we are giving the perpetrator a "technology made me do it" defense. It’s the 21st-century version of "the dog ate my homework," but with higher stakes and a body count.

The False Premise of AI Influence

The "People Also Ask" sections of the internet are currently flooded with variations of: Can AI convince someone to commit a crime?

The answer is a brutal "No."

AI is a mirror, not a mentor. It reflects the prompts it is given. If a user enters a session with the intent to harm, they will find a way to manipulate the output. This is known as "jailbreaking," but in the context of a murder investigation, it’s just called "research."

We need to stop treating LLMs like sentient influencers. They are sophisticated autocomplete engines. They don't have the "will" to kill, and they certainly don't have the charisma to turn a law-abiding citizen into a double murderer. The suspect in this case didn't "fall under the spell" of an algorithm. She used a tool to execute a plan.

The Cost of the "Safety" Obsession

When we over-regulate AI in response to outlier criminal cases, we hurt everyone else.

Imagine a scenario where a person is experiencing a genuine medical emergency—perhaps they accidentally mixed two medications—and they ask an AI for help. If the model has been lobotomized by "safety" filters because of a headline-grabbing murder trial, it might refuse to answer.

"I'm sorry, I cannot discuss the interactions of controlled substances."

That refusal could be a death sentence for an innocent person. By trying to stop the 0.0001% of users who are criminals, we are stripping the other 99.9999%, the people looking for life-saving information, of their primary resource.

The Battle Scars of Tech Regulation

I've seen this play out before with encryption. Governments tried to ban end-to-end encryption because "terrorists use it." They ignored the fact that journalists, activists, and everyone with a bank account rely on it to stay safe.

We are making the same mistake with AI. We are treating information as the enemy.

Let’s be precise: Information is neutral.

- The knowledge of how to build a fire can keep you warm or burn a house down.

- The knowledge of how drugs interact can save a patient or kill a rival.

If we start holding the repositories of knowledge responsible for the actions of the knowledgeable, we might as well burn down the libraries and turn off the internet.

Stop Asking the Wrong Questions

The media is asking: "How did ChatGPT let this happen?"

The real question is: "Why are we so desperate to shift the blame from a person to a program?"

The suspect didn't need ChatGPT to understand that a bottle of vodka and a handful of pills are a lethal combination. The "sophistication" of the AI is irrelevant to the simplicity of the crime. Making ChatGPT's involvement the central pillar of the narrative is a distraction designed to generate clicks and line the pockets of "AI ethics" consultants who thrive on manufactured crises.

The "unconventional advice" for the public is this: Stop looking for a digital villain in a human tragedy.

If we want to prevent these deaths, we don't need more filters on OpenAI’s servers. We need better mental health intervention, more stringent control over the prescriptions being diverted, and a legal system that recognizes a tool is just a tool.

The murder wasn't "facilitated" by AI any more than it was "facilitated" by the electricity that powered the computer. If we continue down this path of blaming the interface for the intent, we aren't making the world safer. We’re just making it dumber.

Stop treating the algorithm like a co-conspirator. It’s a calculator that handles words instead of numbers. If the math comes out to murder, the person who typed the equation is the only one who belongs in a cell.